High-Performance Computing (HPC) and Artificial Intelligence: What Challenges Do They Pose for Data Center Infrastructure?

For the past 20 years, high-performance computing (HPC) has been revolutionizing the way we use technology, both through its ever-evolving power and its rapid adoption across a wide range of industries. Artificial intelligence plays a major role in these advancements and this explosive growth—there’s no denying it. In fact, AI and HPC are intrinsically linked. Yes, but AI combined with the widespread use of high-performance computing requires enormous resources. This raises questions about data center infrastructure: how can such power be accommodated? What are the impacts of such massive resources? What technologies (DLC, high-density PDUs, etc.) enable us to stay on track?

High-Performance Computing (HPC): What Is It?

HPC, or high-performance computing, refers to the use of supercomputers and parallel computing systems to perform extremely complex, large-scale, high-speed computations. These systems are capable ofprocessing trillions, if not quadrillions, of calculations per second.

High-Performance Computing is thus able to provide extremely precise solutions to problems in science, engineering, and data analysis.

Today, these computations make it possible to simulate laboratory tests and experiments that did not always rely on ethical methods. This is the case, for example, with animal testing, which was once widely used in the field of cosmetology.High-performance computing has now rendered these practices virtually obsolete.

The Role of Artificial Intelligence in HPC

Just a few years ago, HPC was used only sporadically by research centers, certain specialized industries, and universities. Today, the use of HPC has become widespread.

This phenomenon is due to artificial intelligence, which has expanded and revolutionized the field of HPC. To be specific, one point must be emphasized:high-performance computing and AI are intrinsically linked.

Here are a few points supporting this claim:

→ AI speeds up calculations

In fact, using state-of-the-art algorithms based ondeep neural networks(DNNs)—which mimic the functioning of the human brain—computationally intensive tasks can be performed more quickly.

→ AI enables the optimization of computing resources

Artificial intelligence is thus able to determine the best way to distribute computational tasks across the various nodes of a supercomputer.

→ AI improves simulation algorithms

Machine learning—and especially deep neural networks—enable us to improve the accuracy of simulations of complex phenomena by adjusting the models based on newly integrated data.

→ AI enables large-scale data analysis

HPC alone generates a massive amount of data. AI has the resources needed to analyze these massive amounts of information.

For the past 20 years, high-performance computing (HPC) has been revolutionizing the way we use technology, both through its ever-evolving power and its rapid adoption across a wide range of industries. Artificial intelligence plays a major role in these advancements and this explosive growth—there’s no denying it. In fact, AI and HPC are intrinsically linked. Yes, but AI combined with the widespread use of high-performance computing requires enormous resources. This raises questions about data center infrastructure: how can such power be accommodated? What are the impacts of such massive resources? What technologies (DLC, high-density PDUs, etc.) enable us to stay on track?

High-Performance Computing (HPC): What Is It?

HPC, or high-performance computing, refers to the use of supercomputers and parallel computing systems to perform extremely complex, large-scale, high-speed computations. These systems are capable ofprocessing trillions, if not quadrillions, of calculations per second.

High-Performance Computing is thus able to provide extremely precise solutions to problems in science, engineering, and data analysis.

Today, these computations make it possible to simulate laboratory tests and experiments that did not always rely on ethical methods. This is the case, for example, with animal testing, which was once widely used in the field of cosmetology.High-performance computing has now rendered these practices virtually obsolete.

The Role of Artificial Intelligence in HPC

Just a few years ago, HPC was used only sporadically by research centers, certain specialized industries, and universities. Today, the use of HPC has become widespread.

This phenomenon is due to artificial intelligence, which has expanded and revolutionized the field of HPC. To be specific, one point must be emphasized:high-performance computing and AI are intrinsically linked.

Here are a few points supporting this claim:

→ AI speeds up calculations

In fact, using state-of-the-art algorithms based ondeep neural networks(DNNs)—which mimic the functioning of the human brain—computationally intensive tasks can be performed more quickly.

→ AI enables the optimization of computing resources

Artificial intelligence is thus able to determine the best way to distribute computational tasks across the various nodes of a supercomputer.

→ AI improves simulation algorithms

Machine learning—and especially deep neural networks—enable us to improve the accuracy of simulations of complex phenomena by adjusting the models based on newly integrated data.

→ AI enables large-scale data analysis

HPC alone generates a massive amount of data. AI has the resources needed to analyze these massive amounts of information.

“In reality, high-performance computing is nothing more than an underlying layer of artificial intelligence.”

Renaud de Saint Albin, CEO and founder of Module IT

The widespread adoption of HPC across various industries

High-performance computing is now used by virtually all industriesand an ever-growing number ofsectors. Here is a non-exhaustive list of sectors that rely on supercomputers for various simulations, analyses, and experiments, along with examples of use cases.

- Banking and finance:data analysis, financial modeling, blockchain management, etc.

- Scientific research:meteorology (particularly climate modeling), pharmaceutical research, biology, etc.

- Automotive industry:crash test simulations, vehicle design, performance optimization, etc.

- Energy:power plant simulations, research on renewable energy, etc.

AI and HPC: What Impact Do They Have on Data Center Energy Consumption?

The surge in power consumption by data centers

Let’s put this into perspective right away: the increase in power consumption isn’t proportional to the increase in power output—and thankfully so! Nevertheless, it’s still quite significant, and this can be explained by several factors:

→ The increase in the processing power of individual CPUs (Central Processing Units):

As increasingly powerful CPUs hit the market, power consumption naturally rises to allow them to operate at full capacity. This is due to the need to process a greater number of operations per second, which logically increases power usage with each computing cycle.

→ The surge in GPUs (Graphics Processing Units)

What is the key advantage of these new components? Their ability to handle multiple tasks and operations simultaneously. The downside, however, is the enormous amount of power these parallel processes consume.

→ Infrastructure densification

This is particularly true in terms of CPU and GPU power per U or rack unit. Over the past two decades, the average power consumption for a fully loaded 42U rack has ranged between 3 and 5 kW. The densest racks, meanwhile, have consumed between 10 and 12 kW.

And today, when it comes to “modest-sized” computing systems, the average power density per rack ranges from 30 kW to 60 kW. By “modest-sized” computing systems, we mean regional research centers, large automotive companies, and audiovisual production companies. This gives you an idea of the enormous evolution that has taken place in just 20 years!

Tiering: A Solution to the Problem of Power Consumption in High-Performance Computing?

As we know, the power requirements for data centers today are5 to 10 times higherthan what was typically used in traditional computing. In addition, there is another constraint:the high availability that these infrastructures must ensure.And this is anabsoluterequirement that many of the sectors mentioned above must strictly adhere to.

The use of differentiated tiering therefore appears to be an effective solution for reducing (or at least managing) electricity consumption.It allows infrastructure to be shut down from time to time without any adverse effects.

In the world of data centers, tiering refers to the system used to classify, evaluate, and certify the reliability and availability of a system. It is organized into a hierarchy of levels, ranging from the most basic(Tier I)to the most available(Tier IV).

For sectors requiring high availability, Tier III is most commonly used (a maximum of 1 or 2 hours of downtime per year). In other areas, one day of downtime may be considered an acceptable compromise, especially if it leads to significant cost savings (both OPEX and CAPEX).

What solutions are available to address the challenge of cooling high-performance computing racks?

Cooling data centers: a major challenge

Cooling is undoubtedly the technical area most affectedby the expansion of computing infrastructure in data centers.

Dissipating the heat generated by these enclosures is a major challenge, requiring sophisticated cooling solutions that can sometimes be extremely costly. These solutions can be bulky and may not always be very effective or safe in maintaining the desired temperatures within operational limits.

Cooling faces the complex task of addressing the following challenges:

→ Density per bay to be treated

We are referring here to the amount of computing power and, consequently, to the heat generated within a server rack. High density results in more heat being emitted in a confined space, thereby making cooling more challenging.

→ The total heat output to be handled

This refers to the total amount of heat generated by all the equipment in a data center. The challenge lies in effectively dissipating that heat to maintain an optimal operating temperature.

→ Optimizing environmental conditions

The goal here is to regulate the temperature and airflow inside the data center so that cooling can take place without damaging the equipment.

→ The need to maximize returns…

… through cooling solutions that minimize energy consumption while effectively dissipating the heat generated.

The higher the efficiency of the cooling solution, the lower the data center's operating costs will be (not to mention its environmental impact).

Cold doors: an effective solution, but with some limitations

In the late 2000s, active and passive cooling doors appeared on the market to manage heat dissipation from high-density server racks.

These systems are still in use today and are effectivefor power densities of up to 35 kW per rack (with a relatively low inlet water temperature: 12–13°C). There are many manufacturers of these systems. Examples include Schroff, Atos Racks, and Vertiv.

The cold door is a solution that has the advantage of not disrupting the existing environment: the vast majority of the heat generated by the HPC rack is treated at the source and does not affect the rest of the room’s environment.

Point of attention

The cold-door cooling system has a few limitations:

- Inlet water temperatures remain low, below 15°C, to ensure sufficient cooling capacity.

- In the event of a system failure, the temperature at the rear of the racks rises extremely quickly due to the low air volume,

- Redundancy among the various components of the door is limited (often restricted to the fans), which limits the overall availability of the system (due to maintenance or failure),

- The system is noisy, and its size must be taken into account when installing it in a confined space.

DLC (Direct Liquid Cooling) to the rescue!

Here, we will discussDLC (or Direct Liquid Cooling) as it applies to core cooling within a standard server cabinet or rack. We will deliberately exclude tank immersion, in which IT equipment is directly submerged in a cooling fluid.

We believe, in fact, that this technique has a number of limitations, despite its satisfactory theoretical effectiveness.

→ For more information, download our Technical Guide, where we discuss cooling methods in greater detail.

The purpose of liquid cooling is to remove heat directly from the server’s core components, where it is generated. Heat is removed by plates mounted on heat-generating components (CPU, GPU, RAM, power supply, etc.). Water or another coolant flows through these plates to extract the heat. There are various liquid cooling systems available.

However, there is currently no standard that allows for the interoperability of all these systems. As a result, cooling infrastructures remain heavily dependent on server manufacturers.

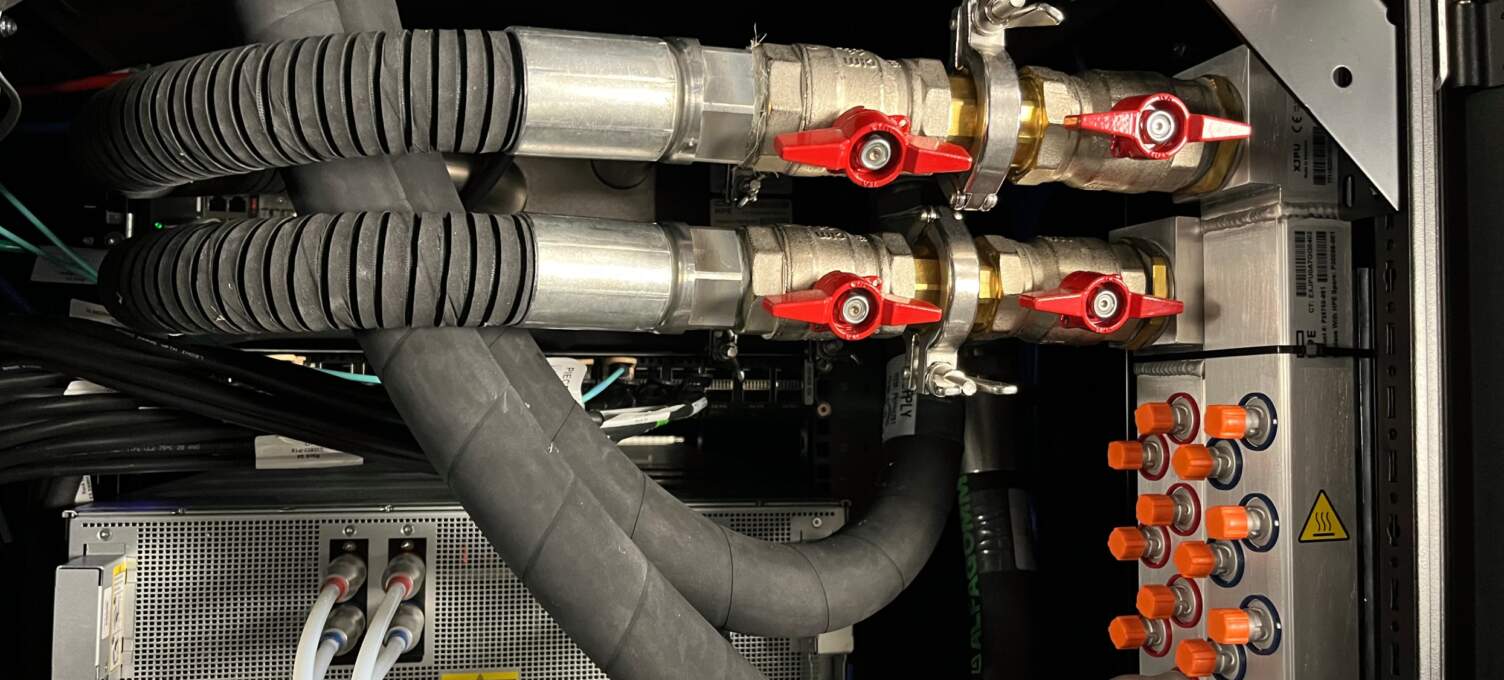

Composition and Types of a DLC Cooling System

A DLC cooling system consists of the following components:

- of thesite’s cooling orheat exchange system

- aCDU (orCooling Distribution Unit)that controls the flow rate, pressure, and temperature of the water or liquid used to cool the servers’ cores.

- arack manifold(supply and return) that distributes and recovers the coolant to and from the various IT devices.

- IT equipment(servers) designed for through-plate cooling (cold plates, liquid inlet and outlet).

Finally, it should be noted that there are two types of CDU on the market:

1.Rack-mountable CDU units(i.e., units designed to be installed directly into a rack or metal cabinet). These units handle the supply and return of coolant for a rack and can handle up to 200 kW while occupying only 4U of rack space.

© COOLIT SYSTEMS

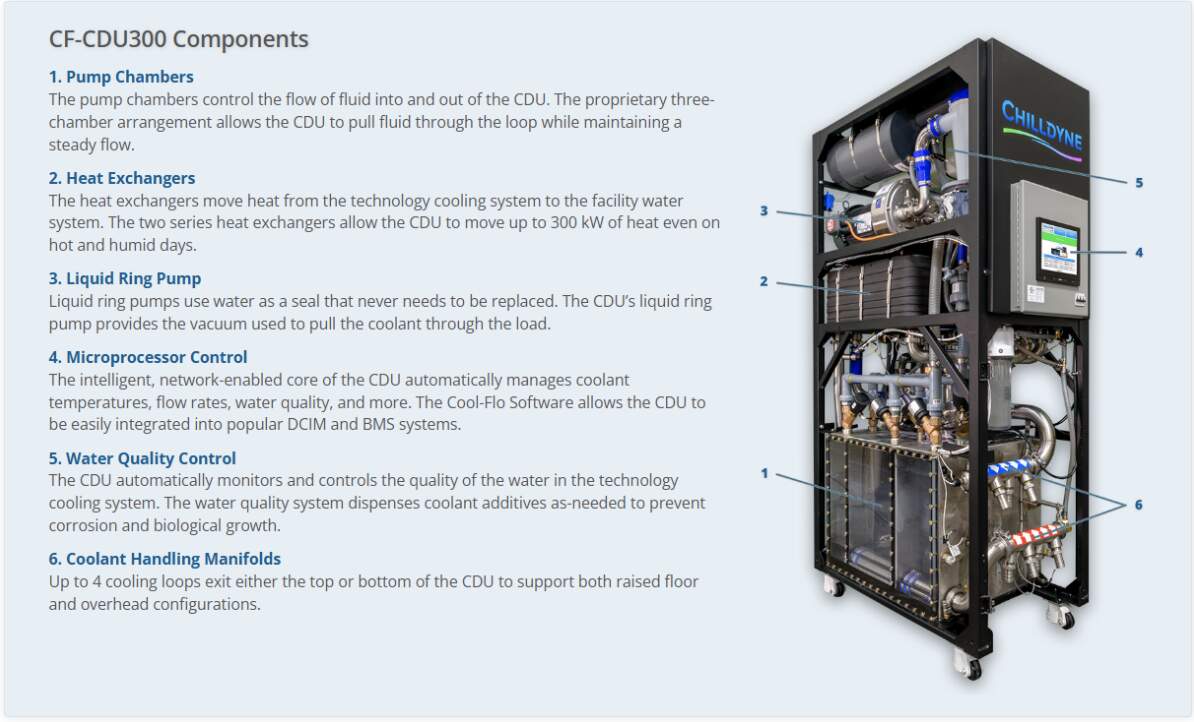

2.“Row-mounted” CDU units(i.e., those specifically designed to be placed in the aisles or rows of racks in a data center). These units can manage multiple racks at once, handling up to more than 1.5 MW, with a footprint roughly equivalent to that of a server rack (though the depth and width may vary depending on the manufacturer).

© 2024 Chilldyne, Inc.

Conclusion

We still have a lot to say about cooling methods. To learn more, stay tuned for our new content or feel free to contact us!

It’s also worth noting that we’re operating in a technological landscape that’s evolving at a pace that was unimaginable just a few decades ago. Will these systems, which we have reviewed at length, become obsolete within the next 10 to 20 years? With the rise of quantum supercomputers—which are starting to make headlines and will clearly be a game-changer—we’re tempted to say, perhaps… But only time will tell for sure!